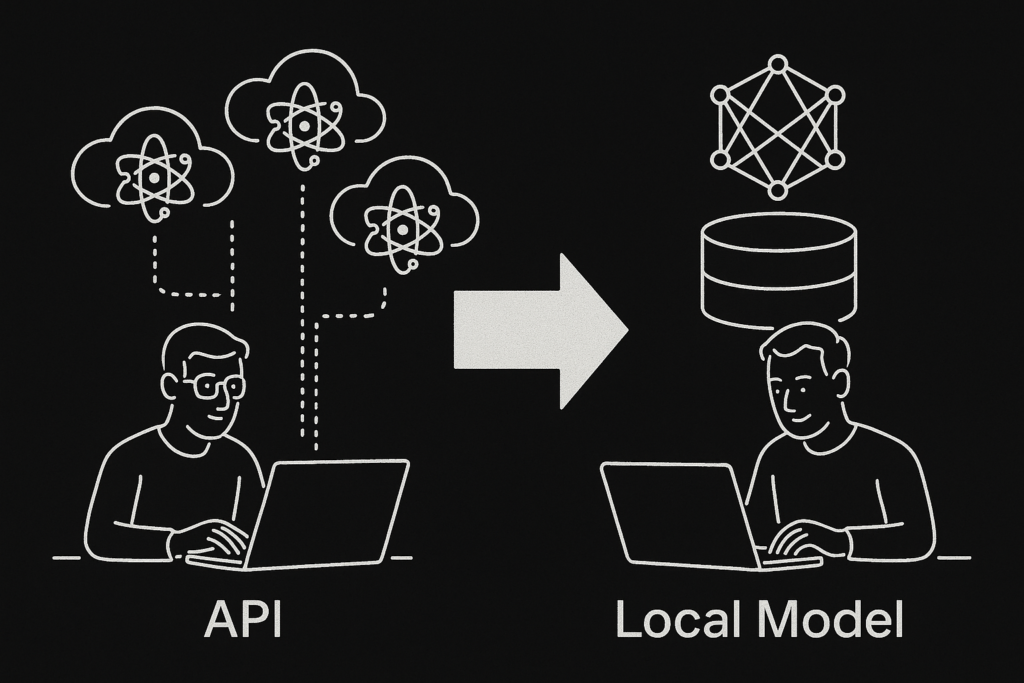

I used to build AI apps the default way — hitting APIs from OpenAI, Anthropic, and others. It worked fine. The models were powerful, the setup was fast, and I could prototype quickly. But there was a ceiling: every experiment had a price tag, and every insight came through a black-box interface. I didn’t really understand what was happening under the hood. I was paying for access, not learning how it all worked.

Now I’ve shifted to running local models like Mistral through Ollama, and the way I work has changed. The model runs on my own machine, so I can iterate freely, limited by my hardware rather than by a usage meter. No API rate limits. No billing anxiety. Just full control over the system. What used to be abstract is now something I can inspect, tweak, and actually understand.

Retrieval-augmented generation (RAG), which once felt like a fragile bridge between local vector stores and remote model calls, now runs entirely locally. Embeddings, context injection, and generation are all handled on my machine. The setup is faster, the iteration cycle is tighter, and I’m finally learning how this stuff actually works by doing it, not outsourcing it.
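
To make that loop concrete, here is a minimal sketch of the three steps (embed, inject context, generate) against a local Ollama server. It is not my exact setup, and several things are assumptions: Ollama listening on its default `http://localhost:11434`, an embedding model such as `nomic-embed-text` already pulled, and the `/api/embeddings` and `/api/generate` request shapes, which may differ between Ollama versions. The helper names (`retrieve`, `build_prompt`, `answer`) are made up for illustration, and the "vector store" is just a Python list.

```python
import json
import math
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # assumption: Ollama's default local address
EMBED_MODEL = "nomic-embed-text"       # assumption: any embedding model you have pulled
CHAT_MODEL = "mistral"


def _post(path, payload):
    """POST a JSON payload to the local Ollama server and decode the JSON reply."""
    req = urllib.request.Request(
        OLLAMA_URL + path,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read().decode("utf-8"))


def ollama_embed(text):
    """Turn text into a vector (assumed endpoint shape: /api/embeddings)."""
    return _post("/api/embeddings", {"model": EMBED_MODEL, "prompt": text})["embedding"]


def ollama_generate(prompt):
    """Get one non-streamed completion (assumed endpoint shape: /api/generate)."""
    payload = {"model": CHAT_MODEL, "prompt": prompt, "stream": False}
    return _post("/api/generate", payload)["response"]


def cosine(a, b):
    """Cosine similarity between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, docs, embed, k=2):
    """Return the k docs most similar to the question.

    Re-embeds every doc on each call to keep the sketch short;
    a real setup would cache the vectors.
    """
    q_vec = embed(question)
    ranked = sorted(docs, key=lambda d: cosine(q_vec, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(question, context_docs):
    """Context injection: paste the retrieved docs above the question."""
    context = "\n".join(f"- {doc}" for doc in context_docs)
    return (
        "Answer using only this context:\n"
        f"{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


def answer(question, docs, embed=ollama_embed, generate=ollama_generate, k=2):
    """Full local RAG loop: retrieve, inject context, generate."""
    return generate(build_prompt(question, retrieve(question, docs, embed, k)))
```

With Ollama running, something like `answer("How do I restart the worker?", my_notes)` would exercise the whole path, where `my_notes` is a list of strings. Passing `embed` and `generate` in as arguments is a deliberate choice: you can swap in stubs and poke at the retrieval and prompt logic without a model loaded at all.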

This isn’t just about cutting costs. It’s about unlocking leverage. Local-first AI puts builders back in the driver’s seat. When you can run the models, understand the outputs, and own the stack end to end, you’re no longer just using AI; you’re shaping it.